ActiveJob¶

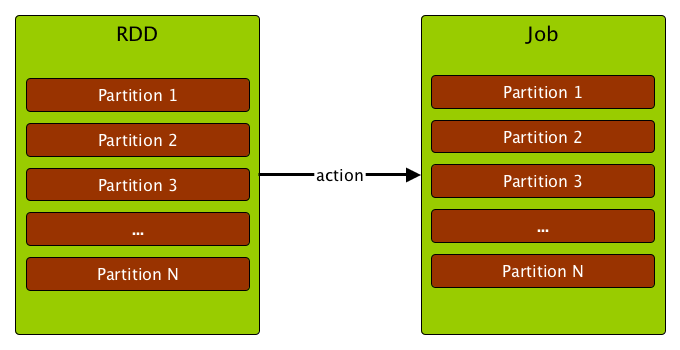

ActiveJob (job, action job) is a top-level work item (computation) submitted to DAGScheduler for execution (usually to compute the result of an RDD action).

Executing a job is equivalent to computing the partitions of the RDD an action has been executed upon. The number of partitions (numPartitions) to compute in a job depends on the type of a stage (ResultStage or ShuffleMapStage).
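The dependence of numPartitions on the stage type can be sketched as follows. This is a minimal model, not Spark's code: the `Stage`, `ResultStage` and `ShuffleMapStage` definitions are simplified stand-ins and `NumPartitionsSketch` is a hypothetical name. It assumes a ResultStage carries the subset of partitions the action asked for, and a ShuffleMapStage covers every partition of its RDD.

```scala
// Simplified stand-ins for Spark's stage classes (assumptions, not the real definitions).
sealed trait Stage
// A ResultStage carries only the partitions the action needs.
final case class ResultStage(partitions: Seq[Int]) extends Stage
// A ShuffleMapStage computes map output for every partition of its RDD.
final case class ShuffleMapStage(numPartitions: Int) extends Stage

object NumPartitionsSketch {
  // Number of partitions the job has to compute, chosen by the final stage type.
  def numPartitions(finalStage: Stage): Int = finalStage match {
    case r: ResultStage     => r.partitions.length
    case m: ShuffleMapStage => m.numPartitions
  }

  def main(args: Array[String]): Unit = {
    // A result job that was asked for a single partition only
    println(numPartitions(ResultStage(Seq(0))))
    // A map-stage job over an RDD with 8 partitions
    println(numPartitions(ShuffleMapStage(8)))
  }
}
```

Modelling the stage as a sealed trait lets the compiler check that both stage types are handled in the match.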

A job starts with a single target RDD, but can ultimately include other RDDs that are all part of RDD lineage.

The parent stages of a job's final stage are always ShuffleMapStages.

Note

Not all partitions have to be computed for ResultStages (e.g. for actions like first() and lookup()).

Creating Instance¶

ActiveJob takes the following to be created:

- Job ID

- Final Stage

- CallSite

- JobListener

- Properties

ActiveJob is created when:

- DAGScheduler is requested to handleJobSubmitted and handleMapStageSubmitted

Final Stage¶

ActiveJob is given a Stage when created, which determines the logical type of the job:

- Map-stage job that computes the map output files for a ShuffleMapStage (for submitMapStage) before any downstream stages are submitted

- Result job that computes a ResultStage to execute an action
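As an illustration of how the final stage determines the logical type, the sketch below matches on the stage type. All names here (`ActiveJobSketch`, `kind`, and the stripped-down stage classes) are hypothetical and exist only for this example. Spark's own classes carry far more state.

```scala
// Hypothetical, simplified stand-ins for Spark's stage classes.
sealed trait Stage
final case class ResultStage(id: Int) extends Stage
final case class ShuffleMapStage(id: Int) extends Stage

// A cut-down job that keeps only the job ID and the final stage.
final case class ActiveJobSketch(jobId: Int, finalStage: Stage) {
  // The logical type of the job follows from the type of its final stage.
  def kind: String = finalStage match {
    case _: ShuffleMapStage => "map-stage job"
    case _: ResultStage     => "result job"
  }
}

object JobKindDemo {
  def main(args: Array[String]): Unit = {
    println(ActiveJobSketch(0, ResultStage(1)).kind)
    println(ActiveJobSketch(1, ShuffleMapStage(0)).kind)
  }
}
```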

Finished (Computed) Partitions¶

ActiveJob uses the finished registry of flags, one per partition, to track which partitions have already been computed (true) and which have not (false).
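A registry like this can be modelled as an array of booleans indexed by partition. The sketch below is an assumption-laden illustration: `FinishedPartitions`, `markFinished`, `numFinished` and `allFinished` are hypothetical names chosen for the example, not members of Spark's ActiveJob.

```scala
// Hypothetical tracker modelled on the description above; not Spark's class.
final class FinishedPartitions(numPartitions: Int) {
  // One flag per partition: true once computed, false otherwise.
  val finished: Array[Boolean] = Array.fill(numPartitions)(false)

  // Marks a partition as computed; returns true only the first time it is marked.
  def markFinished(partition: Int): Boolean = {
    val firstTime = !finished(partition)
    finished(partition) = true
    firstTime
  }

  // Number of partitions computed so far.
  def numFinished: Int = finished.count(identity)

  // True once every partition has been computed.
  def allFinished: Boolean = finished.forall(identity)
}

object FinishedPartitionsDemo {
  def main(args: Array[String]): Unit = {
    val tracker = new FinishedPartitions(3)
    tracker.markFinished(0)
    tracker.markFinished(0) // a repeated completion does not change the count
    println(tracker.numFinished)
    println(tracker.allFinished)
  }
}
```

Keeping a flag per partition rather than a plain counter means a partition reported as finished more than once is counted only once.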